Scrum was built for empiricism: inspect, adapt, and deliver value incrementally. That core is not what needs to change.

What changes is the mechanics — because the system constraints changed. AI is moving the main bottleneck from implementation capacity toward decision quality and judgment. The ceremonies, rhythms, and role expectations built around the old bottleneck need to be recalibrated around the new one.

This is a journey, not a switch. Most teams are somewhere in the middle of it, figuring out what responsible AI-assisted delivery looks like in their context. The adjustments below describe the direction of travel — and how to start moving without a big-bang reorg.

What doesn't change

Before the adjustments, here are the parts of the Scrum Guide's core that AI doesn't alter, and that actually matter more under faster delivery.

Someone must decide priorities, trade-offs, and what success means. One product creator, one backlog. AI running without this produces fast noise, not fast value.

AI can overbuild quickly. Smaller validated changes are safer than large batches — the principle strengthens as generation speed increases.

Fast code generation doesn't replace feedback from real users and business owners. Generated output can look complete and be wrong.

If delivery speed increases, process flaws compound faster. The inspect-and-adapt habit becomes more valuable, not less.

The cycle tightens — it doesn't disappear. Teams with strong feedback habits are better positioned to tighten it safely.

The six adjustments

Before: two-week delivery sprints as the default rhythm, evolved from monthly release cycles.

After: separate the delivery cadence from the inspect-and-adapt cadence. The sprint survives as a reflection rhythm; as a delivery gate, it gradually fades.

Monthly sprints are too slow for most AI-assisted work. Two-week sprints still make sense in regulated, large-coordination, or early-AI environments. For smaller AI-enabled teams, rolling weekly cadences or continuous flow become more appropriate over time.

Practical model (a destination, not a prescription):

- Continuous delivery — increment production, agentic engineering, Steward review by risk level

- Weekly — prioritisation review (30–60 min) and stakeholder review

- Monthly — outcome review and retrospective

- Quarterly — strategy and architecture review

Before: the status round-robin. "What did you do yesterday, what will you do today, any blockers?"

After: a decision and judgment sync. The conversation moves from reporting to steering: what did the system produce, and what needs a human decision?

When much of execution happens through automated pipelines, the daily conversation shifts to: what changed in production, evals, or customer signals? What is blocked by decisions, not tasks? Which agent-generated work needs human review? Are we accumulating hidden quality debt?

In practice: a written update feed by default, AI tooling providing real-time visibility. Working hours shift toward judgment-first work — reviewing generated output, correcting guardrails, defining the next experiments. Live syncs reserved for blockers and decisions. Teams that already ran focused standups will find this a natural evolution.

Before: a story warehouse, meaning a queue of requirements, tickets, and tasks. Long backlogs represent months of planned work.

After: a decision inventory, meaning a portfolio of outcome hypotheses, user problems, and architecture decisions. Items are structured for AI execution.

When building is cheap, the expensive part is choosing what to build. The backlog should reflect that — harder to add to than it is to build from.

Contents of a mature AI-era backlog:

- Outcome hypotheses: what change in user behaviour or business result are we expecting?

- User problems: not "implement X" but "users cannot do Y, which costs Z"

- Architecture decisions: explicit constraints AI execution must respect

- Risks and unknowns: things to learn before building confidently

- Compliance and security requirements: non-negotiables designed in, not bolted on

- Agent-ready execution tasks: only once the above is clear
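One way to picture "items structured for AI execution" is as a record that cannot be handed to an agent until the earlier entries in the list are filled in. The sketch below is illustrative only: the `BacklogItem` fields, the `compliance_reviewed` flag, and the `missing_for_agent_readiness` helper are hypothetical names chosen for this example, not part of Scrum or of any tool.

```python
from dataclasses import dataclass, field


@dataclass
class BacklogItem:
    """Hypothetical shape for one decision-inventory item."""
    outcome_hypothesis: str = ""   # expected change in user behaviour or business result
    user_problem: str = ""         # "users cannot do Y, which costs Z"
    architecture_constraints: list[str] = field(default_factory=list)
    open_risks: list[str] = field(default_factory=list)  # unknowns still to be learned
    compliance_requirements: list[str] = field(default_factory=list)
    compliance_reviewed: bool = False  # assumption: someone explicitly signs this off


def missing_for_agent_readiness(item: BacklogItem) -> list[str]:
    """Return what is still unclear; an empty list means agent-ready."""
    gaps = []
    if not item.outcome_hypothesis.strip():
        gaps.append("outcome hypothesis")
    if not item.user_problem.strip():
        gaps.append("user problem")
    if not item.architecture_constraints:
        gaps.append("architecture constraints")
    if item.open_risks:
        gaps.append("unresolved risks")
    if not item.compliance_reviewed:
        gaps.append("compliance review")
    return gaps
```

A check like this makes the backlog harder to add to than to build from: an item with a vague problem statement or open risks stays a decision to be made, not a task to be executed.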

Product organisations with strong outcome focus are already closer to this. Teams that ran story factories will feel the gap more acutely as AI adoption deepens.

Before: specialised roles in a relay race. The PO writes, the designer designs, the developer implements, QA tests, and the Scrum Master facilitates.

After: the DevOps shift-left pattern, extended. Execution moves left to the Product Creator; Stewards provide the guardrail layer that makes the shift safe.

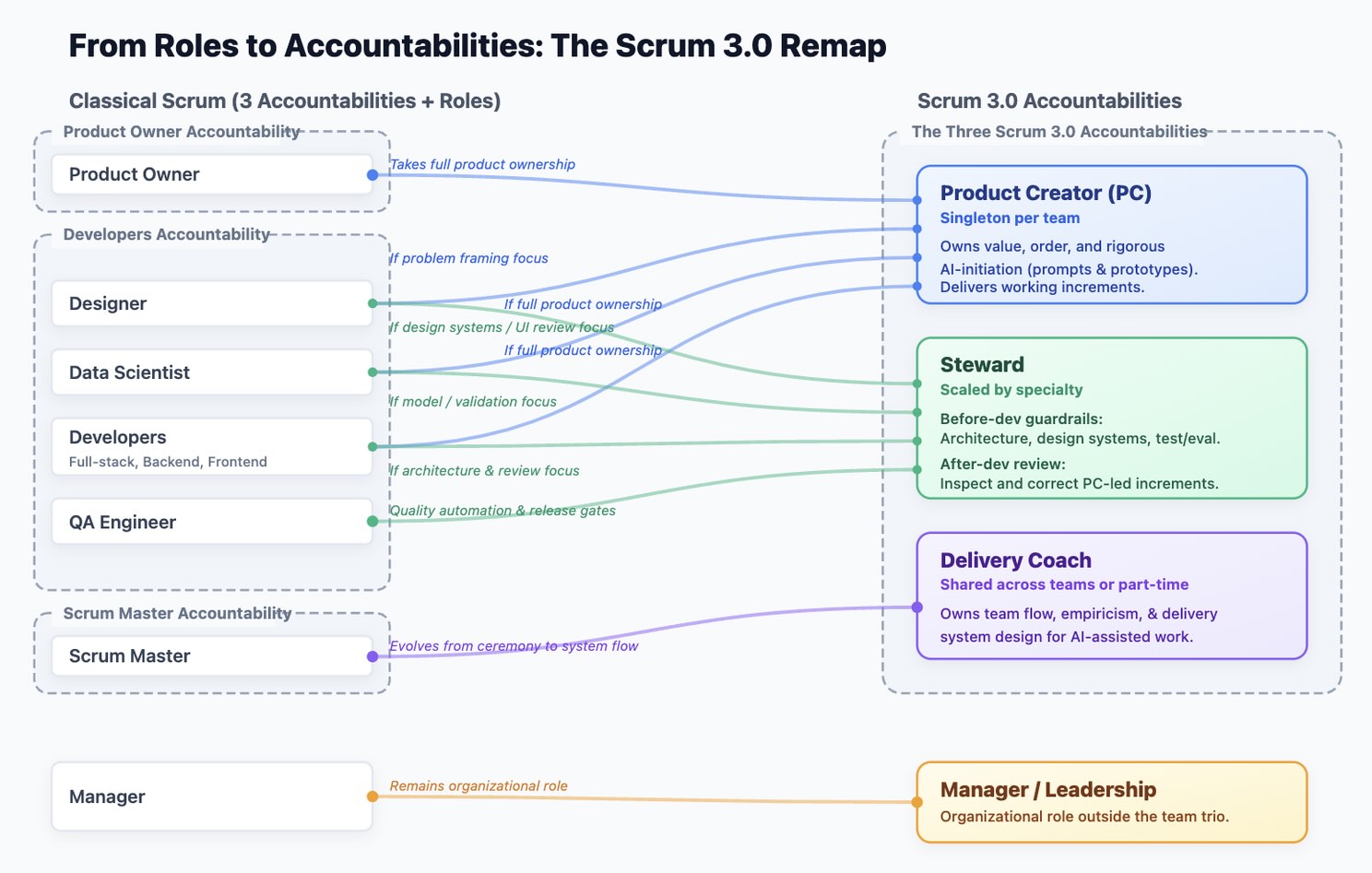

The Scrum Guide's three accountabilities remain; the skills and expectations within them evolve. No role disappears. Roles compress and level up.

Product Creator (was Product Owner). Still the single authority on product goal and priorities. Now also owns how AI execution is initiated: prompt standards, context packaging, and bounded, testable initiations. Direction: more technical fluency over time.

Steward (was Developers). The guardrail layer. Before development: architecture, design systems, test strategy, security baselines. After development: reviewing and correcting Product Creator-led increments. Scaled by specialty as increment volume grows.

Delivery Coach (was Scrum Master). Restored to its original intent: team flow, delivery system design, empiricism, now applied to a faster-moving, AI-assisted system.

Before: velocity, story points, sprint burn-down, man-hours. Optimising for output visibility.

After: validated throughput. AI inflates output without inflating value, which makes output metrics actively misleading as adoption deepens.

Better signals over time:

- Lead time from idea to validated production change

- Deployment frequency and change failure rate

- User outcome improvement

- Experiment learning rate

- Rework rate on AI-generated artifacts

- Architecture health indicators
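Several of these signals can be computed from a simple log of changes. The following is a minimal sketch under stated assumptions: the `Change` record and its field names are hypothetical, each validated change is counted as one deployment, and "rework" is whatever the team decides to flag. It is not a standard definition of any of these metrics.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Change:
    """Hypothetical record of one change that reached production."""
    idea_at: datetime        # when the idea entered the backlog
    validated_at: datetime   # when it was validated in production
    caused_failure: bool     # did it trigger an incident or rollback?
    ai_generated: bool       # was the artifact mainly agent-produced?
    reworked: bool           # did it need significant human rework?


def summarise(changes: list[Change], period_days: int) -> dict[str, float]:
    """Summarise delivery signals for the changes shipped in one period."""
    if not changes or period_days <= 0:
        raise ValueError("need at least one change and a positive period")
    lead_times = [(c.validated_at - c.idea_at).total_seconds() / 86400 for c in changes]
    ai_changes = [c for c in changes if c.ai_generated]
    return {
        "median_lead_time_days": median(lead_times),
        "deployments_per_week": len(changes) / (period_days / 7),
        "change_failure_rate": sum(c.caused_failure for c in changes) / len(changes),
        "ai_rework_rate": (
            sum(c.reworked for c in ai_changes) / len(ai_changes) if ai_changes else 0.0
        ),
    }


# Illustrative data only: four changes shipped over a two-week period.
start = datetime(2024, 1, 1)
example = [
    Change(start, start + timedelta(days=2), False, True, False),
    Change(start, start + timedelta(days=4), True, True, True),
    Change(start, start + timedelta(days=6), False, False, False),
    Change(start, start + timedelta(days=8), False, False, False),
]
summary = summarise(example, period_days=14)
```

User outcome improvement, experiment learning rate, and architecture health are deliberately left out of the sketch: they depend on product-specific definitions that a generic record cannot capture.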

Teams that already measured outcomes alongside output will find this transition easier. The metrics need to catch up with the practice that outcome-focused organisations have already developed.

Before: code merged, tests passing, QA signed off.

After: a Definition of Done that is stronger, not weaker, because generation speed can hide weak quality. Review discipline matters more as output volume increases.

A modern Definition of Done:

- Second-pair-of-eyes review before release — not optional

- Automated tests passing, including AI evals where relevant

- Security and static checks passing

- Observability added for production-facing changes

- Rollback path in place

- Acceptance criteria validated against real behaviour, not just generated code
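The checklist above can be expressed as an explicit release gate. This is a sketch, not a prescribed implementation: the criterion keys and the `unmet_criteria` helper are hypothetical names, and how each piece of evidence is gathered (CI results, review tooling, manual sign-off) is left open.

```python
# Hypothetical keys, one per item in the Definition of Done above.
DEFINITION_OF_DONE = (
    "independent_review",      # second-pair-of-eyes review before release
    "automated_tests_pass",    # including AI evals where relevant
    "security_checks_pass",    # security and static checks
    "observability_added",     # only required for production-facing changes
    "rollback_path",           # a way back is in place
    "acceptance_validated",    # checked against real behaviour
)


def unmet_criteria(evidence: dict[str, bool], production_facing: bool = True) -> list[str]:
    """Return the criteria that are not yet satisfied; empty means done."""
    unmet = []
    for criterion in DEFINITION_OF_DONE:
        if criterion == "observability_added" and not production_facing:
            continue  # the checklist scopes observability to production-facing changes
        if not evidence.get(criterion, False):  # missing evidence counts as unmet
            unmet.append(criterion)
    return unmet
```

Treating missing evidence as unmet is the design choice that matters here: a generated change that merely looks complete does not pass by default.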

How to start moving

This rarely works as a big-bang reorg. A safer sequence that respects the multi-year journey ahead:

The risk isn't moving too slowly. The risk is moving fast on execution without building the guardrail layer that makes fast execution safe.

AI-first delivery without explicit governance, Steward review, and quality automation isn't faster Agile — it's faster chaos. The speed gain requires the safety net. That's been true of every shift-left movement in software, and it's true here too.

The organisations that build the guardrails alongside the capability — not after — will be the ones that sustain the acceleration.

Want to work through this together?

The Pionäär Framework provides a systematic methodology for this kind of calibration: figuring out what to keep, what to adjust, and how to build the new patterns without discarding what works.