Chapter 5: Make Drift Visible

External observation from data catches what self-awareness cannot. Frame anchors, visual constraints, and measurement systems create visibility infrastructure that works with compression physics instead of fighting it.

Self-catch is impossible—the system that needs recovery cannot detect its own need. Traditional management relies on self-assessment (teams reporting status, individuals evaluating performance). Capability building requires external observation through artifacts, visual systems, and objective measurement. Drift invisible in recall becomes visible in comparison.

Frame Anchors

You cannot improve what you cannot see. Traditional coordination relies on self-awareness—teams recognizing when they drift, individuals catching their own mistakes, managers noticing capability gaps. But self-catch is fundamentally impossible.

The problem: The system that needs recovery cannot detect its own need. When you're drifted, the drifted state feels normal from inside it. Memory compresses—you remember facts but lose the weight that made them compete with defaults. "I think I checked" feels identical to "I actually checked."

The mechanism: External observation from data is the only reliable catch. But external observers can't see change in understanding without synthesis—catch only becomes possible after creating artifacts to compare against previous state.

Frame anchors solve this through structured externalization.

What Frame Anchors Are

Frame anchors are snapshots of understanding at each iteration—concrete artifacts that externalize internal state for objective comparison.

Core Frame Anchor Structure

## Intent (stable anchor)
What we're trying to accomplish - doesn't change

## Working Frame (current understanding)
What we think we know about the problem and solution

## Unknowns (explicit gaps)
What we DON'T have clarity on - stated explicitly

## Assertions (user steering - accumulates)
Direct instructions from accountable party - verbatim

## Realizations (insights - accumulates)
What shifted in understanding this iteration

## Sources Loaded (actual not claimed)
What artifacts were actually read - not "I checked"

Each iteration creates a new anchor (N), loads the previous anchor (N-1), and compares the two.

Drift invisible in recall becomes visible in comparison. Not "do I think I drifted?" but "here are two files showing what changed."
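To make the anchor structure concrete, here is a minimal sketch of one way it could be represented as data and serialized to a file. The class name `FrameAnchor`, the field names, and the JSON format are assumptions chosen for illustration; the text only prescribes the six sections, not a storage format.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class FrameAnchor:
    """One snapshot of understanding for a single iteration (hypothetical schema)."""
    iteration: int
    intent: str                                              # stable anchor
    working_frame: str                                       # current understanding
    unknowns: list[str] = field(default_factory=list)        # explicit gaps
    assertions: list[str] = field(default_factory=list)      # verbatim steering, accumulates
    realizations: list[str] = field(default_factory=list)    # insights, accumulates
    sources_loaded: list[str] = field(default_factory=list)  # what was actually read

    def to_json(self) -> str:
        """Serialize the snapshot so it can be written to a file and compared later."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


anchor = FrameAnchor(
    iteration=1,
    intent="Build user dashboard",
    working_frame="Real-time data",
    unknowns=["Data refresh rate"],
)
print(anchor.to_json())
```

Writing each snapshot to its own file is what turns the comparison into file vs file rather than memory vs file.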

How Frame Anchors Catch Drift

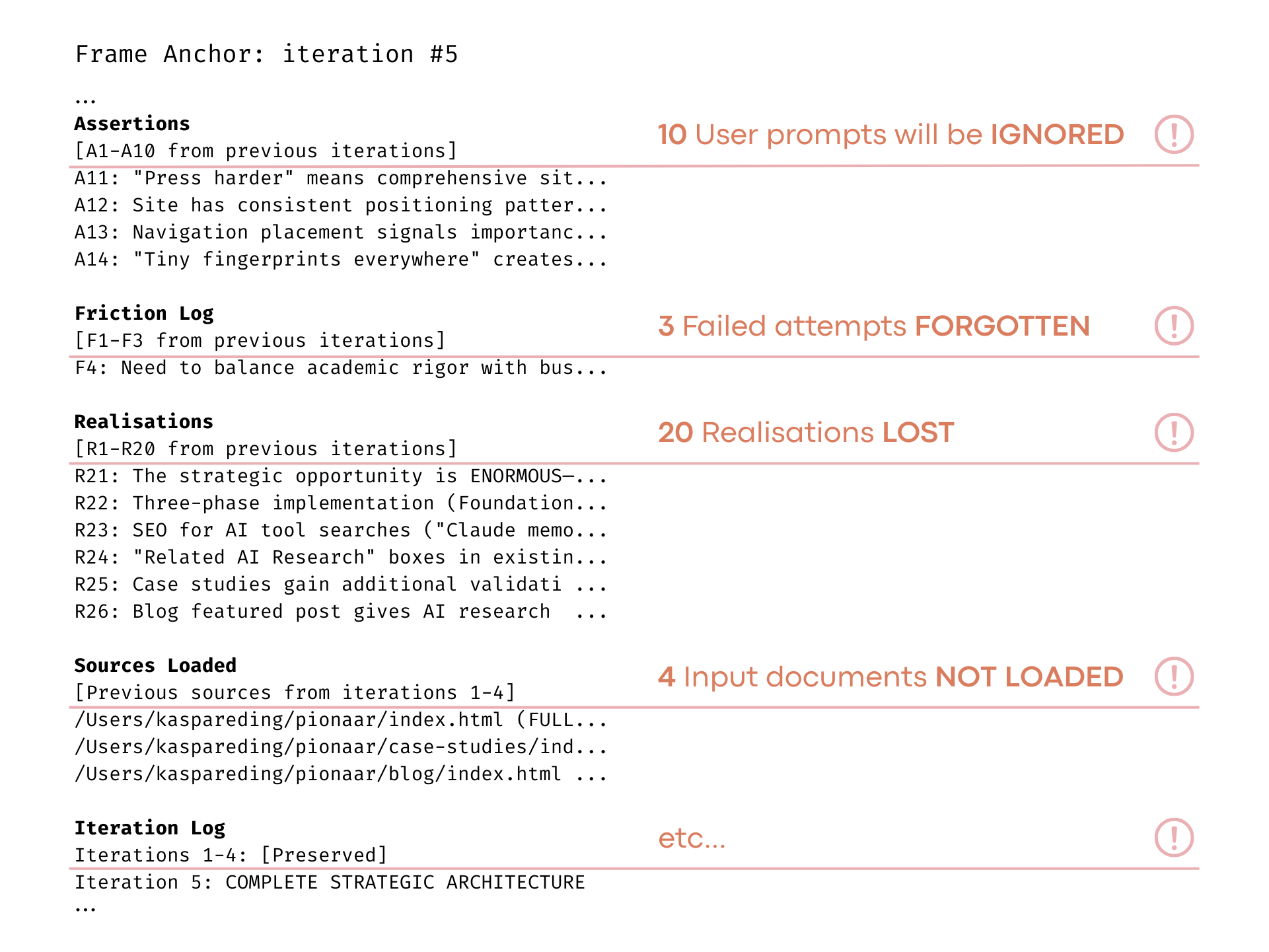

Example frame anchor from AI Lab / LLM implementation of the anchor method

Traditional approach:

- Team member: "I think we're aligned with the goal"

- Manager: "Are you sure?"

- Team member: "Yes, I remember the discussion"

- Drift exists but feels normal from inside

Frame anchor approach:

- Iteration N-1 anchor: "Working Frame: We're building feature X to solve problem Z"

- Iteration N anchor: "Working Frame: We're building feature X to solve problem Y"

- Diff shows: Problem statement changed (Z → Y) without anyone noticing

- External observer catches from comparison, not from self-report

| | Iteration N-1 | Iteration N |
|---|---|---|
| Intent | Build user dashboard | Build user dashboard |
| Working Frame | Real-time data | Historical reports |
| Unknowns | Data refresh rate | (empty) |

Diff reveals:

- Scope shifted (real-time → historical)

- Unknown disappeared without resolution

- Understanding drift invisible from inside

What becomes visible:

- Frameworks Active/Dropped: N-1 mentions Gearbox Model, N doesn't → Relevant framework dropped from working frame

- Focus Movement: N-1 "Focus: Understanding user needs" → N "Focus: Optimizing database queries" → Attention shifted without completing previous work

- Unknowns Appearing/Disappearing: Unknowns section is empty in N although N-1 listed open items → The unknown didn't get resolved, it got forgotten

- Understanding Shifts: N-1 "3-week research spike" → N "Deliverable next sprint" → Scope changed dramatically without reassessment
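The comparison itself can be sketched in a few lines. The example below assumes anchors are plain dictionaries with `intent`, `working_frame`, `unknowns`, and `realizations` keys (hypothetical names), and uses a crude substring check to decide whether a vanished unknown was ever addressed. It illustrates the idea and does not claim to be the method used in the research described here.

```python
def diff_anchors(prev: dict, curr: dict) -> list[str]:
    """Compare anchor N-1 (prev) with anchor N (curr) and list what changed."""
    findings = []
    if prev["intent"] != curr["intent"]:
        findings.append(f"Intent changed: {prev['intent']!r} -> {curr['intent']!r}")
    if prev["working_frame"] != curr["working_frame"]:
        findings.append(
            f"Working frame changed: {prev['working_frame']!r} -> {curr['working_frame']!r}"
        )
    # An unknown that vanished without a matching realization was probably
    # forgotten rather than resolved. Substring matching is an assumed heuristic.
    realizations = " ".join(curr.get("realizations", [])).lower()
    for unknown in prev.get("unknowns", []):
        still_open = unknown in curr.get("unknowns", [])
        if not still_open and unknown.lower() not in realizations:
            findings.append(f"Unknown disappeared without resolution: {unknown!r}")
    return findings


# The dashboard example from this section.
prev = {"intent": "Build user dashboard", "working_frame": "Real-time data",
        "unknowns": ["Data refresh rate"]}
curr = {"intent": "Build user dashboard", "working_frame": "Historical reports",
        "unknowns": []}
findings = diff_anchors(prev, curr)
for finding in findings:
    print(finding)
```

The function only reports differences. Deciding whether a change is drift or a legitimate update remains the observer's job.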

The Comparison Mechanism

Why comparison works when recall fails:

- Memory compresses: You remember approximate facts but lose contextual weight. "We discussed user needs" survives, but the intensity of that concern compresses away.

- Files don't compress: Iteration N-1 file contains exact wording, full context. When you read it alongside N, gaps become undeniable—not memory vs memory but file vs file.

- Two physical things: Not "what do I think I thought before?" but "what does this artifact say vs that artifact?"

- Observer catches, not system: User/manager/team lead reviews N vs N-1 diff. The catch happens externally, where drift is visible, not internally where it feels normal.

AI Research Validation

Discovery D4: No Self-Catch Possible

User catches from data. Boot loader provides visibility infrastructure. Quote: "Self-catch is not possible as far as we know—our design bet was frequent interruptions and controlled drifting." External observation required.

Discovery D13: Frame Anchor as Visibility Infrastructure

Frame anchors externalize state for observation. User catches execution drift from artifacts, not from AI self-report. Comparing two concrete files (N vs N-1) is different from comparing current state against memory of previous state. Memory compresses; files don't.

Discovery D8: Interrupt + Anchor Mechanism Works

Across 4 sessions, 6 recovery cycles were documented with specific mechanisms: token burn + anchor reload, systematic reprocess, N vs N-3 comparison, and user correction during vulnerability. Recovery isn't luck or skill; it's a repeatable structural process.

Substrate Independence: Frame anchor mechanism validated across substrates. Framework developed coordinating humans (10+ years), then tested with AI (8 months). Anchors catch drift in BOTH conditions—self-catch fails universally, external observation from artifacts works regardless of actor type.

Application to Teams

Sprint Planning:

- Create iteration anchor at sprint start: goals, unknowns, committed work

- Create checkpoint anchor mid-sprint: progress, blockers, scope changes

- Compare: What changed? What got dropped? What unknowns appeared?

- External observer (Scrum Master, PM) catches from comparison

Definition of Done as External Artifact:

- Not "I think I completed the checklist"

- Checkbox empty = incomplete (visible, undeniable)

- Checkbox checked = completed (audit trail)

- File shows actual state, not remembered state
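If the Definition of Done is kept as a checklist file, an observer can read its state directly instead of asking. The sketch below assumes a Markdown-style checklist (`- [ ]` for open, `- [x]` for done); that format and the function name `unchecked_items` are assumptions for illustration, not something the text specifies.

```python
import re

# Matches lines such as "- [ ] Tests pass" or "* [x] Docs updated".
CHECKBOX = re.compile(r"^\s*[-*]\s+\[( |x|X)\]\s+(.*)$")


def unchecked_items(checklist_text: str) -> list[str]:
    """Return the checklist items whose box is still empty."""
    items = []
    for line in checklist_text.splitlines():
        match = CHECKBOX.match(line)
        if match and match.group(1) == " ":
            items.append(match.group(2).strip())
    return items


definition_of_done = """\
- [x] Code reviewed
- [ ] Acceptance criteria verified
- [x] Tests pass
- [ ] Release notes updated
"""
remaining = unchecked_items(definition_of_done)
print(remaining)
```

An empty result means every box is checked; anything else is the visible, undeniable list of what is still incomplete.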

Retrospective Comparison:

- Sprint N retro: Team assessment of what went well/poorly

- Sprint N-1 retro: Previous assessment

- Compare: Same issues reappearing? New issues? Improvements actually implemented?

- Patterns emerge that feel-good retros miss

Visual Resource Constraints as Drift Visibility System

Traditional view: Visual constraints help manage priorities and resources.

Capability view: Visual constraints make drift visible before it compounds—particularly D-drift (delivery pressure) and O-drift (owner/oracle override).

The "Everything is Priority #1" problem isn't prioritization failure. It's drift invisibility—delivery pressure makes resource conflicts invisible until too late to prevent failure.

The Finite Space Forcing Function

Traditional prioritization allows unlimited designation:

- 47 high-priority items

- All marked "critical"

- No visible conflict until delivery fails

- Decisions made in abstract, not reality

Finite space forces visible reality:

- Planning board holds exactly 15 cards

- Card 16 requires removing existing card

- Conflict immediate and undeniable

- Trade-off required, not assumed
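The forcing function can be expressed as a small data structure: a board that refuses a new card once it is full unless the caller names the card to remove. This is a sketch under stated assumptions; `PlanningBoard` and its `add` method are hypothetical names, and the capacity of 15 simply mirrors the example above.

```python
from typing import Optional


class PlanningBoard:
    """A board with a hard card limit (hypothetical helper, not a real tool's API)."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self.cards: list[str] = []

    def add(self, card: str, replacing: Optional[str] = None) -> None:
        """Add a card; on a full board the caller must name a card to remove."""
        if replacing is not None:
            self.cards.remove(replacing)  # raises ValueError if that card is not on the board
        elif len(self.cards) >= self.capacity:
            raise ValueError(
                f"Board is full ({self.capacity} cards): name a card to remove"
            )
        self.cards.append(card)


board = PlanningBoard(capacity=15)
for number in range(1, 16):
    board.add(f"card {number}")

try:
    board.add("card 16")                   # no trade-off named: rejected
except ValueError as error:
    print(error)

board.add("card 16", replacing="card 3")   # explicit trade-off: accepted
```

The rejection is the point: the conflict surfaces at the moment of commitment, not at delivery.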

D-Drift (Delivery Pressure) Made Visible

Traditional: Team commits to 20 items, believes they'll deliver all

Visual constraint: Board holds 10 items, 11th requires removing one

Catch: Delivery pressure trying to override capacity reality

Visible conflict prevents silent over-commitment

O-Drift (Owner/Oracle Override) Made Visible

Traditional: Sponsor adds "one more thing" to backlog

Visual constraint: Adding sponsor request means removing team-selected work

Catch: Oracle override making trade-off explicit

Sponsor sees cost of request immediately

P-Drift (Planning/Parking) Made Visible

Traditional: Initiative stays "high priority" but never starts

Visual constraint: If not in active board, explicitly in parking lot

Catch: Planning drift where discussion replaces execution

Parked items visible as separate state, not mixed with active work

Types of Visual Constraints

Capacity Constraints (WIP Limits): Maximum simultaneous work in progress. New work requires completing or abandoning existing. Reveals multitasking drift, hidden work, scope creep.

Time-Box Constraints (Iteration Length): Fixed duration sprints/cycles. Commitment scope must fit time available. Reveals estimation drift, optimism bias, unknown unknowns.

Resource Constraints (Team Size): Fixed team allocation per initiative. Dependencies visible when work exceeds team capacity. Reveals resource conflicts, competing demands, coordination overhead.

Dependency Constraints (Blocking Visualization): Blocked work visually distinct from active work. Dependencies explicitly mapped. Reveals hidden dependencies, coordination gaps, sequencing drift.

Board State as Frame Anchor

Visual board at iteration N vs N-1:

- Position Changes: Item moved from "In Progress" to "Blocked" → Drift in dependency understanding

- Content Changes: Item description expanded → Scope drift (growing requirements)

- Absence/Presence: New items appeared → Scope drift (work added mid-sprint)

Comparison catches what self-assessment misses:

- Team: "We're making good progress"

- Board N vs N-1: 3 items added, 0 items completed, 2 moved to blocked

- Reality: Scope expanded, throughput zero, blockers accumulating
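A board comparison like the one above can be computed from two snapshots. The sketch assumes each snapshot is a dictionary mapping an item name to its column; the column names and the `compare_boards` function are illustrative assumptions.

```python
def compare_boards(prev: dict[str, str], curr: dict[str, str]) -> dict[str, list[str]]:
    """Compare two board snapshots, each mapping item name -> column name."""
    added = sorted(set(curr) - set(prev))
    removed = sorted(set(prev) - set(curr))
    moved = sorted(
        f"{item}: {prev[item]} -> {curr[item]}"
        for item in set(prev) & set(curr)
        if prev[item] != curr[item]
    )
    return {"added": added, "removed": removed, "moved": moved}


# Illustrative snapshots matching the pattern above:
# 3 items added, 0 completed, 2 moved to blocked.
board_prev = {"A": "In Progress", "B": "In Progress", "C": "To Do"}
board_curr = {"A": "Blocked", "B": "Blocked", "C": "To Do",
              "D": "To Do", "E": "To Do", "F": "To Do"}

report = compare_boards(board_prev, board_curr)
completed = [move for move in report["moved"] if move.endswith("-> Done")]
blocked = [move for move in report["moved"] if move.endswith("-> Blocked")]
print(len(report["added"]), "added,", len(completed), "completed,", len(blocked), "blocked")
```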

Measurement as Capability and Drift Indicators

Traditional view: Measurement tracks performance and outcomes.

Capability view: Measurement provides objective indicators of capability development and drift accumulation—data that catches what self-awareness cannot.

Self-reported progress: "We're on track" (drifted state feels normal)

Measured progress: Velocity declining 30% over 3 sprints (objective reality)

Catch happens through data, not through self-assessment.
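As a worked example of the measured signal, the sketch below computes the percent change in velocity across a window of sprints. The story-point numbers are made up for illustration, and the 30% alert threshold is an assumption borrowed from the example above, not a recommended value.

```python
def velocity_change(velocities: list[float]) -> float:
    """Percent change from the first to the last sprint in the window."""
    if len(velocities) < 2:
        raise ValueError("need at least two sprints to see a trend")
    first, last = velocities[0], velocities[-1]
    if first == 0:
        raise ValueError("first sprint velocity must be non-zero")
    return (last - first) * 100 / first


recent = [40, 34, 28]  # illustrative story points, not data from the text
change = velocity_change(recent)
if change <= -30:
    print(f"Velocity down {abs(change):.0f}% over {len(recent)} sprints: investigate drift")
```

The number does not say which drift vector is responsible. It only makes the decline impossible to report as "on track".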

Delivery Performance Metrics (Reframed)

Velocity (Story Points Completed per Sprint)

Traditional: Measure productivity

Capability: Measure execution capability maturity

Drift Signal: Declining velocity indicates drift accumulating—D-drift (over-commitment), P-drift (planning overhead), S-drift (premature optimization), or L-drift (low motivation/capacity)

Use: Not "work faster" but "which drift vector is reducing capability expression?"

Cycle Time (Start to Done Duration)

Traditional: Optimize for speed

Capability: Measure flow capability and blocker resolution

Drift Signal: Increasing cycle time = coordination drift (blockers, dependencies, approval delays)

Use: Not "move faster" but "what's creating friction?" External observation finds structural issues

Lead Time (Request to Delivery Duration)

Traditional: Customer satisfaction metric

Capability: Measure end-to-end coordination capability

Drift Signal: Growing gap between cycle time and lead time = P-drift (work sits waiting to start)

Use: Investigate backlog health, prioritization quality, resource allocation
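Cycle time and lead time differ only in where the clock starts, so the gap between them is the time work spent waiting. A minimal sketch, assuming each work item records the dates it was requested, started, and finished (the field names and dates are hypothetical):

```python
from datetime import date


def flow_times(requested: date, started: date, done: date) -> dict[str, int]:
    """Return lead time, cycle time, and wait time in days for one work item."""
    lead = (done - requested).days   # request to delivery
    cycle = (done - started).days    # start to done
    return {"lead_days": lead, "cycle_days": cycle, "wait_days": lead - cycle}


item = flow_times(
    requested=date(2024, 3, 1),
    started=date(2024, 3, 15),
    done=date(2024, 3, 20),
)
print(item)  # {'lead_days': 19, 'cycle_days': 5, 'wait_days': 14}
```

Here the work itself took 5 days but the request waited 14 days before anyone started, which is the P-drift signature described above.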

Using Measurement for Drift Detection

Single metric = ambiguous signal

Pattern across metrics = clear drift diagnosis

Example: D-Drift Pattern (Delivery Pressure)

- Velocity declining

- Rework percentage rising

- Cycle time stable but quality dropping

- Meeting time increasing (coordination issues from rushed work)

Diagnosis: Delivery pressure overriding capability checks

Example: P-Drift Pattern (Planning/Parking)

- Lead time increasing

- Cycle time stable

- Backlog size growing

- Initiative completion rate declining

Diagnosis: Planning overhead replacing execution, work parked not started

Example: O-Drift Pattern (Owner Override)

- Strategic alignment metrics poor

- Resource allocation mismatched to stated priorities

- Escalation frequency high

- Decision speed slow then sudden

Diagnosis: Oracle/owner making late changes, overriding systematic priorities
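The three example patterns can be encoded as sets of signals and matched against what the observer actually sees. This is a rough sketch: the signal names are hypothetical labels for the bullets above, and requiring at least three matching signals is an assumed threshold that reflects the point that a single metric is ambiguous.

```python
DRIFT_PATTERNS = {
    "D-drift (delivery pressure)": {
        "velocity_declining", "rework_rising",
        "cycle_time_stable", "meeting_time_increasing",
    },
    "P-drift (planning/parking)": {
        "lead_time_increasing", "cycle_time_stable",
        "backlog_growing", "completion_rate_declining",
    },
    "O-drift (owner override)": {
        "strategic_alignment_poor", "allocation_mismatched",
        "escalations_high", "decision_speed_erratic",
    },
}


def diagnose(observed: set[str], min_matches: int = 3) -> list[str]:
    """Return patterns with at least min_matches observed signals, best match first."""
    scored = [(len(signals & observed), name) for name, signals in DRIFT_PATTERNS.items()]
    ranked = sorted(scored, key=lambda pair: -pair[0])
    return [name for score, name in ranked if score >= min_matches]


observed = {"lead_time_increasing", "cycle_time_stable", "backlog_growing"}
print(diagnose(observed))               # ['P-drift (planning/parking)']
print(diagnose({"cycle_time_stable"}))  # [] (one signal alone is ambiguous)
```

The output is a hypothesis for the observer to raise with the team, not a verdict.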

How Visibility Components Work Together

Building coordination capability requires three visibility mechanisms working in concert:

- Frame anchors (micro): Iteration-by-iteration understanding. Every cycle creates N and compares it to N-1. Catches: focus drift, scope drift, framework drift.

- Visual constraints (meso): Continuous resource conflict visibility. Finite space forces explicit trade-offs. Catches: capacity drift, priority drift, coordination drift.

- Measurement systems (macro): Trend analysis across time. Drift pattern diagnosis through metrics. Catches: performance decline, systematic drift.

All three feed the external observer, who uses them to diagnose which drift vector is active. The team adjusts based on objective reality, not feelings.

The Observer's Role

Frame anchors don't catch drift automatically—they enable catching.

Observer (manager, team lead, peer reviewer) role:

- Reads anchor N

- Loads anchor N-1

- Compares side-by-side

- Notes: What changed? What dropped? What appeared?

- Checks against visual boards: Does artifact match reality?

- Reviews metrics: Does pattern support observation?

- Raises findings with team: "I see X changed to Y—what happened?"

Not interrogation—collaborative investigation:

- Team in drifted state can't see drift (feels normal inside)

- Observer sees from outside using data

- Discussion surfaces reality: "Oh, we dropped that framework without realizing"

- Correction happens through shared understanding, not blame

External observation is a structural requirement, not a trust issue. Even highly capable teams drift. Self-catch is physically impossible. The observer role is capability infrastructure, not oversight. Teams improve by learning their drift patterns through external data.

Visibility in Practice: AI Implementation Example

The visibility infrastructure described above is not theoretical: it has been implemented and tested. The Boot Loader V2.1 and Frame Anchor system apply these patterns to AI coordination, using the same principles validated across 10+ years of human team coordination.

Frame Anchors: External Observation Architecture

The implementation: Each iteration creates a new frame anchor file (N). Previous anchor (N-1) is loaded for comparison. Two concrete files—not memory vs file, but file vs file.

What the anchor captures:

- Intent - Stable reference point (rarely changes)

- Working Frame - Current understanding (changes each iteration)

- Unknowns - Explicit gaps (should shrink or resolve, not disappear)

- Assertions - User steering, verbatim (accumulates)

- Sources Loaded - What was actually read (not claimed)

N vs N-1 comparison surfaces:

- Scope drift - Working frame changed without new information

- Framework drift - Active model dropped without completing

- Unknown disappearance - Gaps vanished without resolution

- Theater - Claims to check without Sources Loaded evidence

Evidence: 6 documented recovery cycles across 4 sessions used this exact mechanism. Drift invisible from inside drifted frame became visible through file comparison. The observer (USER) catches from data—the system that needs recovery cannot detect its own need.

User Guide: The Observer Role

The Boot Loader User Guide operationalizes the external observer function with the L1-L5 Steering Model—matching intervention intensity to drift depth.

| Level | AI State | USER Steering |
|---|---|---|
| L1 | Missing single item | Light redirect |
| L2 | Missing context | Systematic slowdown |
| L3 | Frame misalignment | Pointed correction |
| L4 | Correct words, wrong behavior | Direct instruction |
| L5 | Mode collapse | Full stop, recovery |

Substrate Independence: Same observer role, same escalation model works for human team coordination. Manager observes sprint artifacts, matches intervention to drift severity, facilitates recovery rather than preventing drift. The physics are universal.

From Visibility to Systematic Recovery

Visibility infrastructure catches drift. But catching isn't preventing—drift is continuous, inevitable under cognitive load. The question isn't "how to prevent drift?" but "how to design systems that recover systematically?"

Chapter 6: Prime for Recovery introduces memory priming: designing with compression instead of fighting it. It covers the awareness + pointers pattern, bidirectional communication architecture, and monthly cycles as a designed reprocessing rhythm.